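Let's have a look at another example, this time with three predictors: `lm(formula = scale(y) ~ scale(x1) + scale(x2) + scale(x3), data = df2)`, whose summary reports "Residual standard error: 0.9392 on 6 degrees of freedom".

Estimating \(\sigma^2\) by the Mean Square Error (MSE) in the ANOVA table, we end up with your expression for \(\mathrm{se}(b)\). The denominator \(n\sum_i x_i^2 - \left(\sum_i x_i\right)^2\) can be written as \(n\sum_i (x_i - \bar{x})^2\). Thus,

\[ \mathrm{se}(b) = \sqrt{\frac{\hat{\sigma}^2}{\sum_i (x_i - \bar{x})^2}}, \qquad \hat{\sigma}^2 = \frac{1}{n-2}\sum_i \hat{\varepsilon}_i^2, \]

where \(\hat{\sigma}^2\) is exactly the MSE.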
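To make the formula concrete, here is a minimal plain-Python sketch. The function name `slope_standard_error` and the sample data are hypothetical, not taken from the original post. It fits a least-squares line, estimates \(\hat{\sigma}^2\) as the MSE with \(n-2\) degrees of freedom, and returns \(\mathrm{se}(b) = \sqrt{\hat{\sigma}^2 / \sum_i (x_i - \bar{x})^2}\).

```python
import math


def slope_standard_error(xs, ys):
    """Return (b, se_b): the least-squares slope of ys on xs and its standard error.

    se(b) = sqrt(sigma_hat^2 / S_xx), where S_xx = sum((x - x_bar)^2) and
    sigma_hat^2 = SSE / (n - 2) is the MSE from the ANOVA table.
    """
    n = len(xs)
    if n != len(ys) or n < 3:
        raise ValueError("need at least 3 paired observations")
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    s_xx = sum((x - x_bar) ** 2 for x in xs)
    s_xy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    b = s_xy / s_xx                  # least-squares slope
    a = y_bar - b * x_bar            # least-squares intercept
    sse = sum((y - (a + b * x)) ** 2 for x, y in zip(xs, ys))
    mse = sse / (n - 2)              # sigma_hat^2: two parameters were estimated
    return b, math.sqrt(mse / s_xx)


# Hypothetical data, chosen only so the arithmetic is easy to follow.
b, se_b = slope_standard_error([1, 2, 3, 4, 5], [2, 4, 5, 4, 5])
print(b, se_b)  # slope 0.6, se(b) = sqrt(0.8 / 10), about 0.283
```

Here \(\sum_i (x_i - \bar{x})^2 = 10\) and the residual sum of squares is 2.4, so the MSE is \(2.4/3 = 0.8\).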

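In my post, it is found that

\[ \mathrm{se}(b) = \sqrt{\frac{n\hat{\sigma}^2}{n\sum_i x_i^2 - \left(\sum_i x_i\right)^2}}. \]

(For the standardized single-predictor model fitted to `df1`, the summary reports "Residual standard error: 1.044 on 8 degrees of freedom".)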
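The step connecting this expression to the centered form is a short piece of algebra, using \(\sum_i x_i = n\bar{x}\) and \(\sum_i (x_i - \bar{x})^2 = \sum_i x_i^2 - n\bar{x}^2\):

\[ n\sum_i x_i^2 - \Big(\sum_i x_i\Big)^2 = n\sum_i x_i^2 - n^2\bar{x}^2 = n\Big(\sum_i x_i^2 - n\bar{x}^2\Big) = n\sum_i (x_i - \bar{x})^2, \]

so the factor \(n\) cancels and

\[ \frac{n\hat{\sigma}^2}{n\sum_i x_i^2 - \left(\sum_i x_i\right)^2} = \frac{\hat{\sigma}^2}{\sum_i (x_i - \bar{x})^2}. \]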

In this article I'm going to use a user-defined function to calculate the slope and intercept of a regression line. So if you haven't read my previous article about its derivation, then I …

The residual standard deviation (or residual standard error) is a measure used to assess how well a linear regression model fits the data; the other common measure of goodness of fit is \(R^2\). It is essentially the square root of the mean of the squared residuals, except that the sum of squared residuals is divided by the residual degrees of freedom (\(n-2\) with one predictor) rather than by \(n\). But before we discuss the residual standard deviation, let's try to assess the goodness of fit graphically. If you want the standard deviation of the residuals (the differences between the regression line and the data at each value of the independent variable), it is reported as: Root Mean Squared Error: 0.0203.

Testing predictors one at a time can be misleading. For example, suppose we apply two separate tests for two predictors, say \(x_1\) and \(x_2\), and both tests have high p-values. One test suggests \(x_1\) is not needed in a model with all the other predictors included, while the other test suggests \(x_2\) is not needed in a model with all the other predictors included. But this doesn't necessarily mean that both \(x_1\) and \(x_2\) are unnecessary in a model with all the other predictors included. It may well turn out that we would do better to omit either \(x_1\) or \(x_2\) from the model, but not both. How then do we determine what to do? We'll explore this issue further in Lesson 6.

In another example, an engineer collects stiffness data from particle board pieces with various densities at different temperatures and produces the following linear regression output. The standard errors of the coefficients are in the third column.

If possible, I would like to display the summary of the original linear regression but with bootstrapped standard errors and the corresponding p-values (in other words, the same beta coefficients but different standard errors).

The standardized coefficients in regression are also called beta coefficients, and they are obtained by standardizing the dependent and independent variables. Standardizing a variable means transforming its values so that its mean becomes 0 and its standard deviation becomes 1. We can find the standardized coefficients of a linear regression model by applying the `scale` function to the variables while creating the model.

Example: creating the regression model with `Model1 <- lm(y ~ x, data = df1)` and inspecting it with `summary(Model1)`. Its output includes "Residual standard error: 1.453 on 8 degrees of freedom".

Creating the regression model for standardized coefficients with `Model1_standardized_coefficients <- lm(scale(y) ~ scale(x), data = df1)` and calling `summary(Model1_standardized_coefficients)` gives, among other lines: Multiple R-squared: 0.0309, Adjusted R-squared: -0.09024, F-statistic: 0.2551 on 1 and 8 DF, p-value: 0.6272.
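The plain-Python sketch below mimics what fitting on `scale()`d variables does for a single predictor; it is not R's implementation, and the helper names and data are hypothetical. It standardizes both variables with the sample standard deviation (the \(n-1\) denominator that `scale()` uses) and shows two consequences: the standardized slope equals the raw slope times \(s_x/s_y\), and, because the standardized response has total sum of squares \(n-1\), the residual standard error becomes \(\sqrt{(1-R^2)(n-1)/(n-2)}\). Plugging in the reported \(R^2 = 0.0309\) with \(n = 10\) (8 residual degrees of freedom plus 2 estimated coefficients) reproduces the 1.044 reported for the standardized `df1` model.

```python
import math
import statistics


def standardize(values):
    """Rescale to mean 0 and sample standard deviation 1, as R's scale() does."""
    m = statistics.mean(values)
    s = statistics.stdev(values)  # n - 1 denominator, like R's sd()
    return [(v - m) / s for v in values]


def simple_fit(xs, ys):
    """Least-squares intercept, slope and residual standard error for ys ~ xs."""
    n = len(xs)
    x_bar, y_bar = sum(xs) / n, sum(ys) / n
    s_xx = sum((x - x_bar) ** 2 for x in xs)
    s_xy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    slope = s_xy / s_xx
    intercept = y_bar - slope * x_bar
    sse = sum((y - intercept - slope * x) ** 2 for x, y in zip(xs, ys))
    return intercept, slope, math.sqrt(sse / (n - 2))


def standardized_rse(r_squared, n):
    """Residual standard error of a one-predictor model fitted to standardized data.

    The standardized response has total sum of squares n - 1, so
    SSE = (1 - R^2)(n - 1) and RSE = sqrt(SSE / (n - 2)).
    """
    return math.sqrt((1 - r_squared) * (n - 1) / (n - 2))


# Hypothetical data (not the article's df1).
xs = [1, 2, 3, 4, 5]
ys = [2, 4, 5, 4, 5]
_, b_raw, _ = simple_fit(xs, ys)
a_std, beta, _ = simple_fit(standardize(xs), standardize(ys))
print(beta, b_raw * statistics.stdev(xs) / statistics.stdev(ys))  # equal up to rounding
print(abs(a_std) < 1e-12)  # intercept of the standardized model is 0

# R-squared and n = 8 + 2 taken from the standardized df1 output quoted above.
print(round(standardized_rse(0.0309, 10), 3))  # 1.044
```

With one predictor the standardized slope is also the Pearson correlation between \(x\) and \(y\), which is why its square equals \(R^2\).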

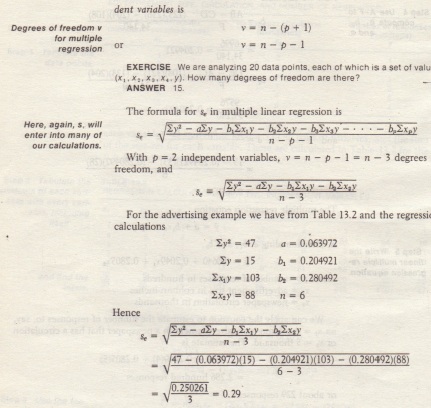

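Multiple linear regression, in contrast to simple linear regression, involves multiple predictors, so testing each variable can quickly become complicated. A population model for a multiple linear regression that relates a \(y\)-variable to \(p-1\) \(x\)-variables is written as

\[ y_i = \beta_0 + \beta_1 x_{i,1} + \beta_2 x_{i,2} + \cdots + \beta_{p-1} x_{i,p-1} + \varepsilon_i. \]

The standard error of a regression slope is a way to measure the uncertainty in the estimate of that slope. It is the denominator of the test statistic \(t = (b - \beta_{\text{hyp}})/\mathrm{se}(b)\), where \(\beta_{\text{hyp}}\) is the hypothesized value of the slope. Note that the hypothesized value is usually just 0, so this portion of the formula is often omitted.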
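For the single-predictor case, a minimal sketch of that test statistic looks like this; the function name and data are hypothetical. The `hypothesized` argument defaults to 0, matching the usual null hypothesis, and the statistic is returned together with its \(n-2\) degrees of freedom. Turning it into a p-value needs a t-distribution CDF, which Python's standard library does not provide, so that step is left out.

```python
import math


def slope_t_statistic(xs, ys, hypothesized=0.0):
    """Return (t, df) for testing H0: slope == hypothesized in ys ~ xs.

    t = (b - hypothesized) / se(b), with se(b) = sqrt(MSE / S_xx) and df = n - 2.
    """
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    s_xx = sum((x - x_bar) ** 2 for x in xs)
    b = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys)) / s_xx
    a = y_bar - b * x_bar
    mse = sum((y - a - b * x) ** 2 for x, y in zip(xs, ys)) / (n - 2)
    se_b = math.sqrt(mse / s_xx)
    return (b - hypothesized) / se_b, n - 2


# Hypothetical data; with the default hypothesized value 0 the "- 0" term drops out.
t, df = slope_t_statistic([1, 2, 3, 4, 5], [2, 4, 5, 4, 5])
print(t, df)  # about 2.121 on 3 degrees of freedom
```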